We all know that an effective quality assurance program can transform agent performance and the level of service you deliver, and with it customer satisfaction. Still, it’s often difficult to get buy-in from stakeholders who don’t quite understand its value.

And their reasoning is simple: “Show me the impact this is having on our customers,” or, if you’re in a highly regulated environment, “Show me the money we’ve avoided in fines.”

In theory, this should be quite easy, but when you’re plagued with spreadsheets and cumbersome software, it’s often very difficult.

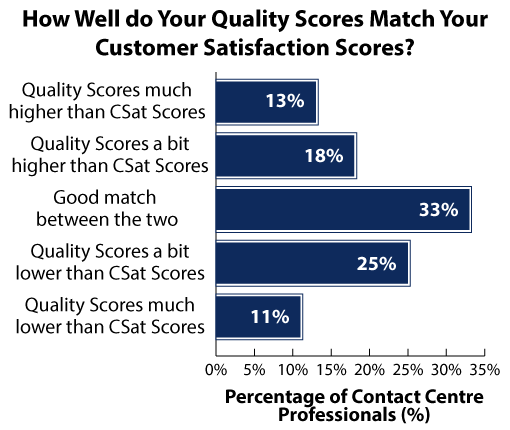

Instead, we present our senior team with a Quality score: an internal metric that is a fantastic indicator, so long as you can trust it and it correlates with your CSAT scores. Unfortunately, research by our friends at Call Centre Helper shows the majority (67%) can’t.

Such discrepancies between the two cause senior stakeholders to worry, and their faith in our one metric (Quality score) dissolves.
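One rough way to check whether your Quality score really moves with CSAT is to compute the correlation between the two over the same periods. The sketch below is only an illustration: the `pearson` helper and the monthly figures are made up for the example, and you would swap in your own exported data.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one series is constant")
    return cov / sqrt(var_x * var_y)

# Made-up monthly averages, for illustration only.
quality_scores = [82, 85, 88, 90, 93]      # internal Quality score, %
csat_scores = [3.9, 4.0, 4.2, 4.3, 4.5]    # CSAT on a 1-5 scale

r = pearson(quality_scores, csat_scores)
print(f"Quality vs CSAT correlation: {r:.2f}")
```

A value close to 1 suggests the two metrics rise and fall together. A value near 0, or a negative one, is a sign that the scorecard may not be measuring what your customers care about.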

How can you improve your call center agents’ performance?

More often than not, the Quality Scorecard is where the problem starts. Fix this and your life becomes much, much easier!

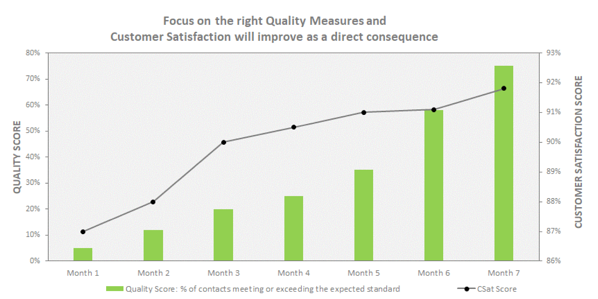

In this blog, we’re going to provide you with our top tips for creating a great scorecard. And for those who make it to the bottom, we’ll demonstrate the results we’ve achieved in the past, and how you can use our quality assurance software to achieve the same.

Tip 1: Get the right balance

First and foremost, it’s imperative that you achieve a good balance across three key areas:

- Customer experience & soft skills

- Process adherence

- Compliance

Too much focus on customer experience and you’re going to be getting lots of repeat calls because customers haven’t been taken through the right processes.

However, focus too heavily on process adherence without the soft skills, and you may as well be measuring a robot!
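To see how balanced an existing scorecard is, you can add up the points available in each of the three areas and compare their shares. This is a minimal sketch under stated assumptions: the line items, point values and the 50% cutoff in `unbalanced_areas` are all hypothetical, so pick the threshold that suits your own operation.

```python
# Hypothetical scorecard: each line item belongs to one of the three areas
# and carries a number of points. Names and points are illustrative only.
line_items = [
    ("Built appropriate rapport", "customer_experience", 20),
    ("Showed empathy", "customer_experience", 15),
    ("Followed the troubleshooting process", "process_adherence", 20),
    ("Logged the case correctly", "process_adherence", 15),
    ("Verified customer identity", "compliance", 15),
    ("Read the required disclosure", "compliance", 15),
]

def area_shares(items):
    """Return each area's share of the total available points (0 to 1)."""
    total = sum(points for _, _, points in items)
    shares = {}
    for _, area, points in items:
        shares[area] = shares.get(area, 0) + points / total
    return shares

def unbalanced_areas(items, max_share=0.5):
    """List areas whose share of points exceeds max_share (an assumed cutoff)."""
    return [area for area, share in area_shares(items).items() if share > max_share]

for area, share in area_shares(line_items).items():
    print(f"{area}: {share:.0%}")
print("Over-weighted areas:", unbalanced_areas(line_items))
```

If one area dominates the list, that is a prompt to revisit the form before you revisit the agents.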

Tip 2: Revisit your scorecards

A lot of scorecards that we’ve seen over the years are very binary.

- Has the agent done X – yes or no.

- Has the agent done Y – yes or no.

Such an approach doesn’t leave any room for differentiating performance or helping develop those soft skills.

Instead, think about the outcome that the line item is trying to achieve, and measure that.

Old Way:

Did the agent say the customer’s name three times during the call?

New Way:

Did the agent build an appropriate rapport with the customer?

If you’ve already made this change, then think about your quality assurance scoring.

Some of the scoring mechanisms that we’ve deployed over the years expand on the pass/fail approach. It’s not just about whether the agent is passing or failing.

Instead, you should first understand whether they’re meeting the expected standard. If not, it’s a customer service coaching opportunity, and your scorecard should enable evaluators to mark the line item in this way.

Moving beyond the simple binary approach gives you a much better understanding of your call center agent performance, and actually gives agents the opportunity to exceed the standard and collect good customer feedback. If all you’re doing is measuring whether each agent passes, then there’s nowhere else for them to go.
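As an illustration of scoring beyond pass/fail, here is a small sketch of a three-level rating per line item. The level names, the `summarise` helper and the sample evaluation are assumptions made for this example, not a description of any particular product’s scoring model.

```python
from enum import Enum

class Rating(Enum):
    COACHING_OPPORTUNITY = 0  # below the expected standard
    MEETS_STANDARD = 1
    EXCEEDS_STANDARD = 2

def summarise(evaluation):
    """Summarise one evaluation given as {line item: Rating}."""
    if not evaluation:
        raise ValueError("evaluation has no line items")
    coaching = [item for item, rating in evaluation.items()
                if rating is Rating.COACHING_OPPORTUNITY]
    exceeded = [item for item, rating in evaluation.items()
                if rating is Rating.EXCEEDS_STANDARD]
    met_or_better = len(evaluation) - len(coaching)
    return {
        "meets_standard_rate": met_or_better / len(evaluation),
        "coaching_items": coaching,   # what to cover in the next 1:1
        "exceeded_items": exceeded,   # what to recognise and share
    }

# Hypothetical evaluation of a single call.
evaluation = {
    "Built appropriate rapport": Rating.EXCEEDS_STANDARD,
    "Followed the correct process": Rating.MEETS_STANDARD,
    "Verified customer identity": Rating.MEETS_STANDARD,
    "Resolved the query on first contact": Rating.COACHING_OPPORTUNITY,
}

summary = summarise(evaluation)
print(summary)
```

Keeping the coaching items and the exceeded items as separate lists means the same evaluation feeds both the coaching conversation and the recognition, rather than collapsing into a single pass mark.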

Reliable, flexible call center agent scorecards are your business partner when it comes to improving customer interactions through robust quality assurance.

Tip 3: Change perceptions

So far, we’ve given you a couple of ideas on how to improve your scorecard, and help your call centers to thrive, but if you really want to go above and beyond, this next bit is for you.

Often the forgotten stakeholders, agents and team leaders are key to driving performance improvements. So, it’s on us, as a quality team, to change their perceptions about what we do and break down those silos.

Agents know what makes or breaks a customer experience, because they’re on those calls, speaking to your customers day in, day out. So involve them!

Involve them in your calibration sessions. Involve them when you’re designing your forms and you’ll see perceptions change.

In summary

- Get the right balance.

- Measure the outcome you want to see and score it in the right way.

- Involve your front line.

Incorporate all of this into your call center scorecard design, and we’re pretty certain you’ll see customer experience skyrocket and agent performance improve, just like our example below.